Introduction to VMDirectPath

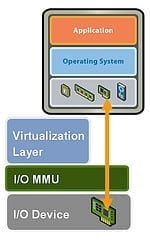

Although hardware independence is fundamental to many of the advantages of virtualization, it also creates a problem when you need to connect a specific hardware device to a virtual machine. SCSI passthrough has been available in ESX for some time, but accessing other types of device, especially USB, has always required alternative solutions such as USB over IP hubs. With the release of vSphere 4, VMware have introduced a new feature called VMDirectPath I/O, which allows up to two PCI(e) devices on the host server to be connected to a virtual machine. Officially this is provided to reduce latency, and hence improve performance, for devices such as 10Gb NICs and Host Bus Adapters, and only a handful of such devices are supported by VMware. In practice, however, pretty much any PCI(e) device can be connected; just bear in mind that VMware will not help with any problems you may encounter.

So far, so good, but there are a number of things to consider before you can go ahead and connect a device. First are the system requirements: VMDirectPath requires a host server which supports Intel’s VT-d or AMD’s IOMMU technology. That means the latest generation of chipsets, so if your server is over a year old you’re probably out of luck. The second significant issue is that, because you are directly connecting your virtual machine to physical hardware, several key features will no longer be available on that VM, namely:

- vMotion and Storage vMotion

- Fault Tolerance

- Snapshots and VM suspend

- Device Hot Add
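The two considerations above can be turned into a quick pre-flight check before you commit a VM to passthrough. What follows is only a minimal sketch in Python: the function name `passthrough_blockers` and its parameters are made up for illustration and are not part of any VMware tool or API. The feature names simply mirror the list above.

```python
# Features the list above names as unavailable on a VM using VMDirectPath.
UNAVAILABLE_WITH_PASSTHROUGH = {
    "vMotion",
    "Storage vMotion",
    "Fault Tolerance",
    "Snapshots",
    "VM suspend",
    "Device Hot Add",
}


def passthrough_blockers(host_has_iommu, required_features):
    """Return reasons why VMDirectPath is a poor fit (empty list if none).

    host_has_iommu    -- True if the host supports VT-d/IOMMU (hypothetical flag
                         you set yourself after checking the hardware and BIOS)
    required_features -- names of the features this VM must keep
    """
    blockers = []
    if not host_has_iommu:
        blockers.append("host lacks VT-d/IOMMU support")
    # Any required feature that passthrough removes is a blocker.
    for feature in sorted(set(required_features) & UNAVAILABLE_WITH_PASSTHROUGH):
        blockers.append(f"{feature} is not available with a passthrough device")
    return blockers


if __name__ == "__main__":
    for reason in passthrough_blockers(True, ["Snapshots", "vMotion"]):
        print(reason)
```

An empty result means nothing in the list rules the VM out; it says nothing about whether the particular device will actually work.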

Provided that hasn’t put you off, we’ll now look at how to do it. In this example we map the USB2 root hub on an HP DL380 G6 server so that an external USB drive can be connected to an XP VM.

Setting Up VMDirectPath

Configure the Host Server

Although you have already checked that your host server supports VT-d or IOMMU, you should still check in the BIOS that the technology is enabled. If it’s not obvious where this setting is, consult the manufacturer’s documentation. Also make sure that the device you are planning to connect is installed in the server. In this example we will be using the USB2 root hub, which is embedded in the chipset, so that’s not an issue. However, if you are connecting extra storage to the server, check the boot controller order: I wasted 30 minutes wondering why ESXi wouldn’t load until I realized it was trying to boot off the external USB hard drive I’d connected for this test 🙂

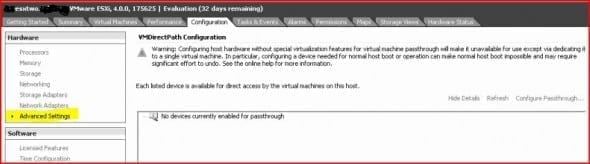

From now on we will be doing all the configuration via the vSphere client, so boot your server up into ESXi 4 (the procedure is the same for ESX) and connect the vSphere client to it. Select the server in the left pane and click the Configuration tab, then click Advanced Settings:

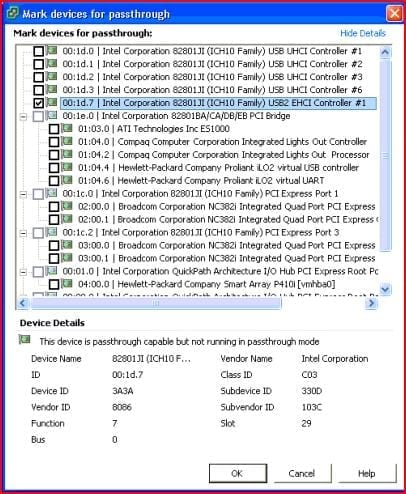

Now click Configure Passthrough… and you will be presented with a list of the PCI(e) devices available in the host server:

Hopefully the device you wish to connect will be quite obvious; otherwise some experimentation will be necessary, as you can only connect a maximum of two devices to a virtual machine. At first glance we have a bit of a problem when selecting our USB device in the example here, as there are five similar USB Controllers listed. On closer inspection, though, only one controller is marked as USB2, and it also says “EHCI”. I know that the external USB ports on the server are USB2, so I figure this has to be the one.
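The reasoning used to pick the controller amounts to a keyword match on the device names. As a rough sketch only, here it is in Python. The device names are hypothetical stand-ins for what the passthrough list shows (I have not copied them from the dialog), and `find_usb2_controllers` is a helper invented for this illustration.

```python
def find_usb2_controllers(device_names):
    """Return the names that mention USB2 or EHCI (case-insensitive)."""
    keywords = ("usb2", "ehci")
    return [
        name
        for name in device_names
        if any(keyword in name.lower() for keyword in keywords)
    ]


# Hypothetical names: five similar USB controllers, only one of them USB2/EHCI.
devices = [
    "USB UHCI Controller #1",
    "USB UHCI Controller #2",
    "USB UHCI Controller #3",
    "USB UHCI Controller #4",
    "USB2 EHCI Controller",
]

candidates = find_usb2_controllers(devices)
print(candidates)
```

If the match returns more than one candidate, you are back to experimentation, one device at a time.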

Check the box next to the device you wish to connect, then click OK to apply the changes. The window will close and after a few moments you should see an “Update host configuration” task run successfully in the Task Pane. The screen will then list the device you have connected, along with a message saying you have to reboot the host server.

To reboot the host server, simply right-click its entry in the left pane and select Reboot from the drop-down menu. Once it has finished restarting, you should see that the PCI(e) device now has a green tag to show it is active and available for connection.

Adding the PCI(e) Device to a Virtual Machine

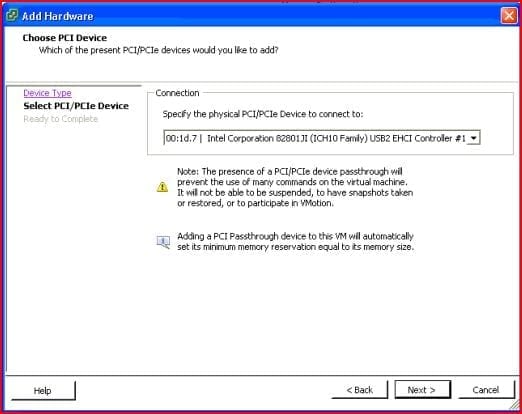

The virtual machine must be powered off in order to add a hardware device, so if it is running, shut it down. Then select the Summary tab and click Edit Settings. The Virtual Machine Properties window should open on the Hardware tab, so click Add, select PCI Device and click Next.

You are now prompted to select the PCI(e) device to connect, and the drop-down box should show the devices you added when configuring the host server previously. If you connected more than one device you will have to select the correct one; otherwise just click Next, check that the settings are correct on the next page and click Finish. This time you should see an “Updating virtual machine configuration” task running, and when that has completed you will be able to start your VM.
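Two preconditions apply to this step: the VM must be powered off, and a VM can take at most two passthrough devices. As a hedged illustration, the check below encodes just those two rules in Python. The function and constant names are my own and do not correspond to anything in the vSphere client or its APIs.

```python
# Limit stated in this article for vSphere 4.
MAX_PASSTHROUGH_DEVICES_PER_VM = 2


def can_add_passthrough_device(vm_powered_on, devices_already_attached):
    """Return (ok, reason) for adding one more PCI(e) device to a VM.

    Both arguments are values you supply yourself; nothing here talks to a host.
    """
    if vm_powered_on:
        return False, "power off the VM before adding a PCI device"
    if devices_already_attached >= MAX_PASSTHROUGH_DEVICES_PER_VM:
        return False, "the VM already has the maximum of two passthrough devices"
    return True, "ok to add the device"


if __name__ == "__main__":
    ok, reason = can_add_passthrough_device(vm_powered_on=False,
                                            devices_already_attached=1)
    print(ok, reason)
```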

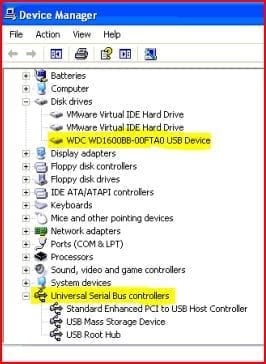

Assuming the VM is running a variant of Windows, it should detect the new hardware automatically, just as if it were a physical machine that you had added the device to. Provided the driver is already available in Windows it will be installed automatically; otherwise you will be prompted to supply the driver files in the usual way. In this example I have connected the USB2 Controller to a Windows XP SP3 VM, which recognizes it automatically and also detects and installs the external hard drive connected to the server, as you can see in this screenshot of the Windows Device Manager.

Conclusion

Since the new VT-d/IOMMU technology allows a direct mapping between the PCI(e) device and the virtual machine, in theory you should be able to use any device which is compatible with your operating system. However, as mentioned at the start of this article, VMware have only certified it for use with a handful of devices, and reports from users testing other hardware are still scarce. The Unofficial HCL over at vm-help.com does include a section on VMDirectPath tested components, but at the time of writing only three were listed; hopefully this will improve with time. This certainly doesn’t mean that your device won’t work, though. Just be sure to test it thoroughly before using it in a production environment.